When did the bug start?

When you find a bug, the most important question is usually “why is this happening?” But there’s an earlier question that makes the “why” much easier to answer: “when did this start happening?”

Antithesis has some unique tricks for answering this question. Our deterministic simulation testing lets you rewind time for a specific bug, then explore forward again along different timelines. For each new timeline, you see whether you hit the bug again or not. This lets you identify times where the bug becomes much more likely. Then you can check the logs around that point to see what happened.

Our new causality analysis report makes this process easy. We provide a graph of bug probability over time, along with logs that automatically filter to any point of interest you zoom in on.

Previously, this feature was only available as part of a manual analysis process run by our Customer Experience team. Today we’re releasing an upgraded version that you can launch straight from your main Antithesis triage report for any bug you’re interested in. You’ll see real-time results streaming in as Antithesis explores more branches. And if you’re investigating with an AI agent, it can help analyze the report for you.

Toy bug: flipping coins

To see how causality analysis works, we’ll start with a simple toy example of a program that simulates flipping two coins one after another, and then checks the result later. If both coins come up heads, we count it as a bug.

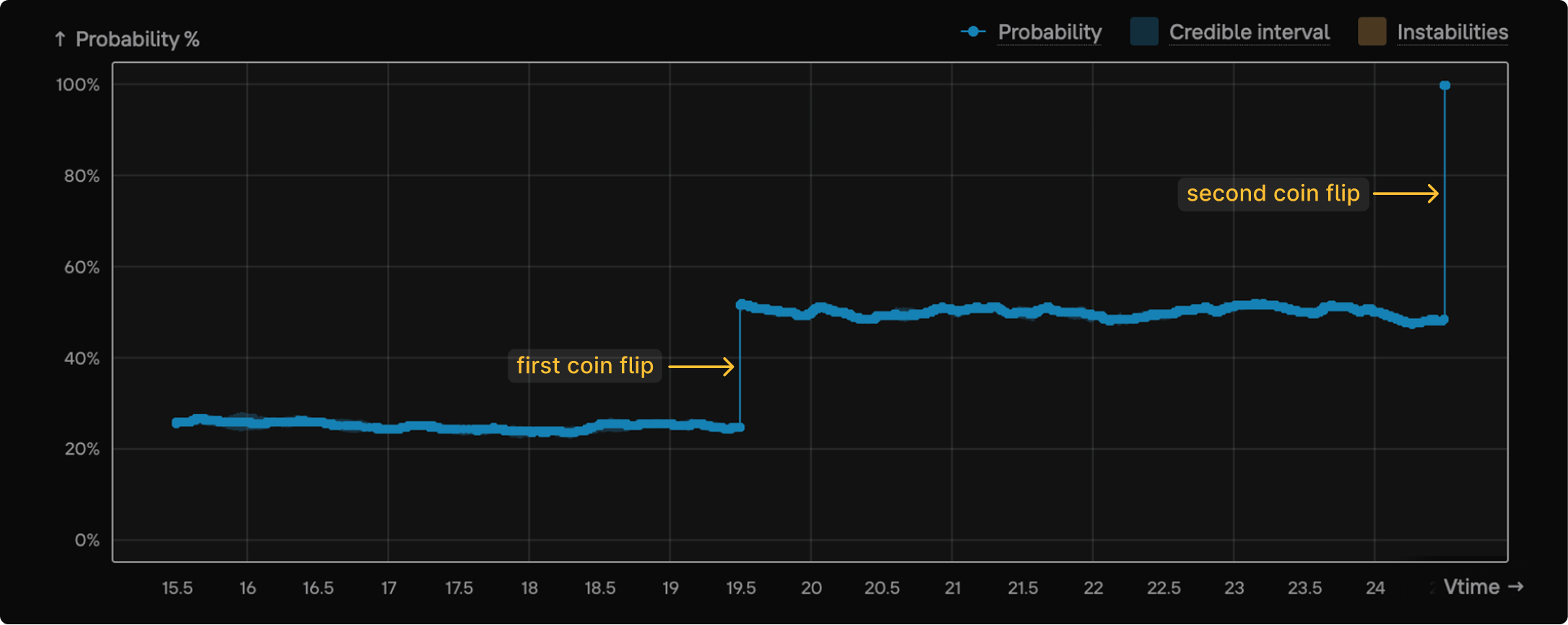

We take a single timeline where we saw the bug and rewind time to various points. Then from each point we let Antithesis run the simulation forward multiple times, injecting different patterns of faults, and see if we still hit the bug. Putting all this information together gives us a graph of bug probability against time.1 Here’s the graph, with the coin flips labelled:

To interpret this graph, it’s easiest to work backwards from the latest time:

- If you rewind back to a point after the second flip, you know that both coins came up heads. At this point the bug is “baked in”: you’re guaranteed to see it when you check, and so the probability is 100%.

- If you rewind to somewhere between the first and second flip, you know that the first coin came up heads. But as Antithesis explores forwards again, the second coin flip could be either heads or tails, leading to a bug probability of roughly 50%.

- If you rewind to before the first flip, each coin flip could be either heads or tails, leading to a bug probability of roughly 25%.

The great thing about the graph is that the coin flips immediately jump out at you as literal jumps in probability. That’s a strong signal that you should check the logs at those points to see what happened.
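The three probability levels can be reproduced with a small Monte Carlo sketch. This is only an illustration of the reasoning, not how Antithesis explores timelines: the function names `replay` and `bug_probability` are made up for this example, and a "rewind point" is modelled simply as the number of coins already known to be heads.

```python
import random

def replay(heads_already_seen, rng):
    """Replay forward from a rewind point.

    heads_already_seen is 0, 1 or 2: how many coins were already flipped
    (and came up heads) before the rewind point. Returns True if both
    coins end up heads, i.e. the bug is hit.
    """
    remaining = 2 - heads_already_seen
    # Every coin still to be flipped must come up heads for the bug to occur.
    return all(rng.random() < 0.5 for _ in range(remaining))

def bug_probability(heads_already_seen, trials=20_000, seed=0):
    """Estimate the bug probability by replaying many times from one point."""
    rng = random.Random(seed)
    hits = sum(replay(heads_already_seen, rng) for _ in range(trials))
    return hits / trials

for label, fixed in [("before first flip", 0),
                     ("between the flips", 1),
                     ("after second flip", 2)]:
    print(f"{label}: {bug_probability(fixed):.2f}")
```

The estimates should come out close to 0.25, 0.50 and exactly 1.00, matching the three levels in the graph.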

Real bug: stale reads in etcd

Now let’s look at a more interesting real-world bug that Antithesis found in etcd, a distributed key-value store that’s used as the primary database for Kubernetes and other systems.

This was an extremely rare, hard-to-trigger bug where etcd returned stale data to a client that requested the most up-to-date value of a key. It showed up only about once in every 19 days of testing with Antithesis.

That’s a long time to wait to see a bug. And that’s with Antithesis’ intelligent fault injection and exploration. Traditional chaos testing would effectively never reproduce this bug.

To have any hope of debugging in a reasonable time frame, we needed to find a way to make it happen more often.

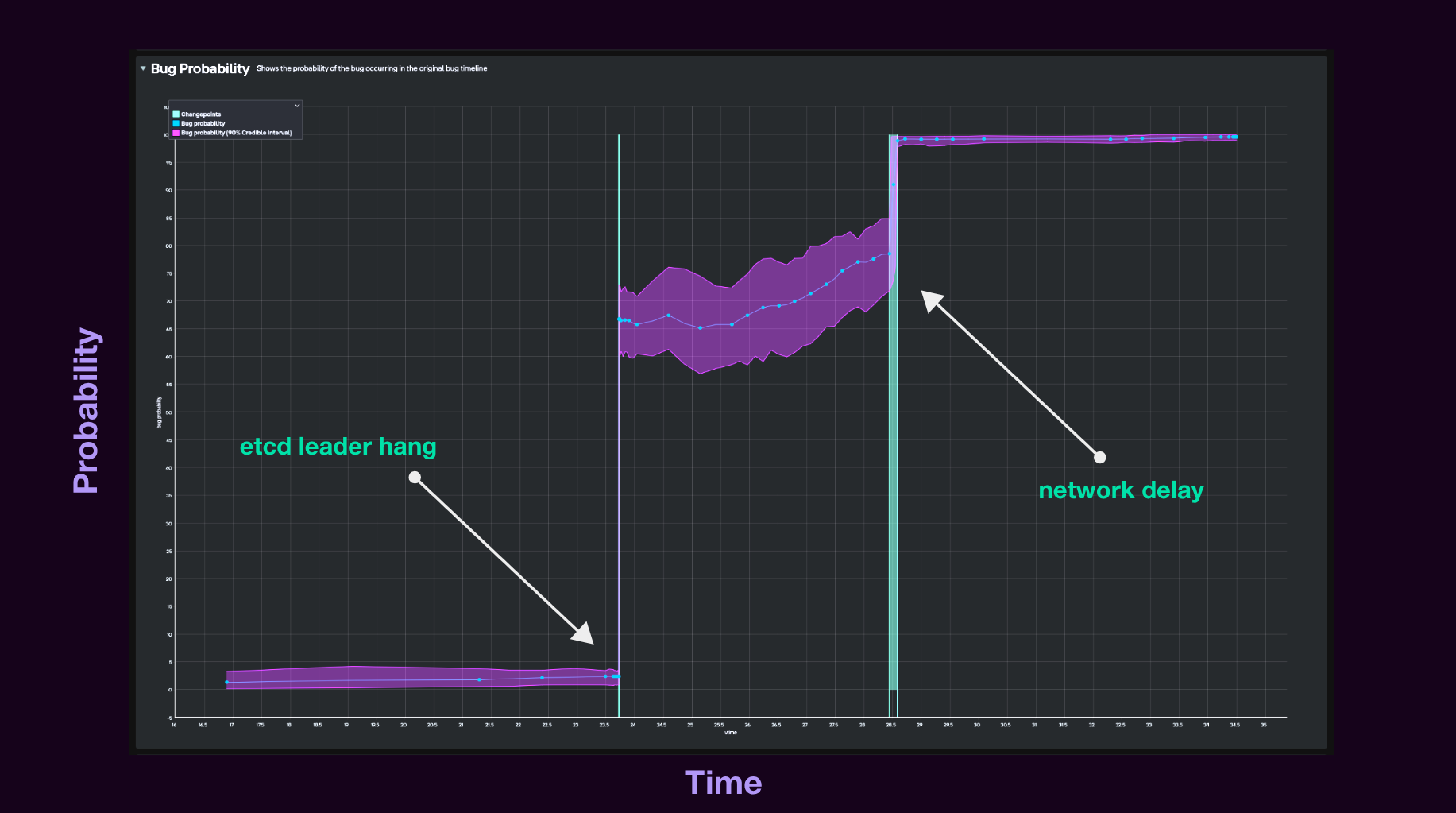

To understand the situation better, we kicked off a causality analysis report for a specific instance of the bug. This gave us the following graph:3

You can see two sharp jumps in probability. After the second jump, the probability of seeing the bug is 100%. So we know that something happens at this point that locks the bug in. Before the first jump, the probability of Antithesis finding the bug when it explores forward is close to 0%, which fits with the fact that the bug is extremely rare.

To see what caused the jumps, we checked the logs and found that they corresponded to points where Antithesis added faults: a container hang at the time of the first jump, and a network delay at the time of the second.
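If you had the graph's data as a list of (time, probability) samples, spotting the jumps could be sketched as below. This is a hypothetical helper, not part of the Antithesis report or any Antithesis API, and the sample values are invented for illustration only; they are not the numbers from the etcd report.

```python
def find_jumps(samples, min_rise=0.2):
    """Return (start_time, end_time, rise) for each pair of adjacent samples
    whose bug probability increases by at least min_rise."""
    jumps = []
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        rise = p1 - p0
        if rise >= min_rise:
            jumps.append((t0, t1, rise))
    return jumps

# Invented samples: near 0%, a first jump, a plateau, then a jump to 100%.
samples = [(0, 0.01), (10, 0.01), (20, 0.02), (30, 0.45),
           (40, 0.47), (50, 1.0), (60, 1.0)]

for start, end, rise in find_jumps(samples):
    print(f"jump of {rise:.2f} between t={start} and t={end}")
```

Each reported window is a place to look in the logs for whatever event made the bug more likely.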

We tuned Antithesis to inject these types of faults more frequently, and started seeing the bug twenty times more often. Still rare, but roughly once a day instead of once every 19 days is a big improvement!

This extra data helped pinpoint the exact chain of events that led to the bug. To understand what went wrong, let’s look at how reads normally work in etcd. When a client requests data from a follower node, the follower asks the leader for the latest commit index. The leader confirms with the rest of the cluster that it’s still the leader, then replies. The follower then waits to catch up to that index before returning the data to the client.
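The normal read path described above can be sketched as a toy model. This is not etcd's code: the `Leader` and `Follower` classes, their methods, and the `acks_from_peers` parameter are assumptions made for illustration, and "waiting to catch up" is modelled as simply applying the missing log entries.

```python
class Leader:
    def __init__(self, commit_index, cluster_size):
        self.commit_index = commit_index
        self.cluster_size = cluster_size

    def read_index(self, acks_from_peers):
        """Reply with the commit index only if a majority confirms leadership."""
        votes = 1 + acks_from_peers  # the leader counts itself
        if votes <= self.cluster_size // 2:
            raise RuntimeError("leadership not confirmed")
        return self.commit_index

class Follower:
    def __init__(self, log):
        self.log = log            # list of (key, value) entries in commit order
        self.applied_index = 0    # number of log entries applied so far
        self.state = {}

    def apply_up_to(self, index):
        """Apply log entries until applied_index reaches index."""
        while self.applied_index < index:
            key, value = self.log[self.applied_index]
            self.state[key] = value
            self.applied_index += 1

    def read(self, key, leader, acks_from_peers):
        index = leader.read_index(acks_from_peers)  # ask the leader first
        self.apply_up_to(index)                     # catch up before answering
        return self.state.get(key)

leader = Leader(commit_index=2, cluster_size=3)
follower = Follower([("x", "old"), ("x", "new")])
follower.apply_up_to(1)                             # follower lags by one entry
print(follower.read("x", leader, acks_from_peers=1))
```

In this model the lagging follower returns the newest value because it catches up to the leader's commit index first. The guarantee depends entirely on the leader's confirmation step being trustworthy.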

In the buggy timeline, the leader hangs and the confirmation request times out. A retry mechanism (added in etcd 3.5) re-sends it, but reuses the same request identifier. Meanwhile, the rest of the cluster elects a new leader. When the old leader wakes up, it doesn’t realise it’s been replaced.

Next, the network delay holds up the retry just long enough for an unrelated read request to arrive at the old leader first. When the retry finally arrives with its duplicate identifier, a delayed response from the cluster to the original confirmation request comes back. The stale leader mistakenly treats it as confirming the newer request too.

The duplicate identifier is what made this bug possible: the leader couldn’t distinguish the retry from the original. So the fix was to use a unique identifier for each retry.
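To make the role of the identifier concrete, here is a deliberately simplified toy model. It is not etcd's implementation: `StaleLeader`, `stale_read_possible` and `make_id` are hypothetical names, the leadership change is only implied by a comment, and the model keeps just one detail from the story above, namely that the leader matches acknowledgements to pending requests by identifier alone.

```python
import itertools

class StaleLeader:
    """Toy leader that matches acks to pending requests by ID only."""
    def __init__(self):
        self.pending = {}    # request_id -> label of the waiting attempt
        self.confirmed = []  # attempts the leader believes were confirmed

    def receive_request(self, request_id, attempt):
        self.pending[request_id] = attempt

    def receive_ack(self, request_id):
        attempt = self.pending.pop(request_id, None)
        if attempt is not None:
            self.confirmed.append(attempt)

def stale_read_possible(make_id):
    """Return True if a late ack for the original attempt confirms the retry."""
    leader = StaleLeader()
    original_id = make_id()
    late_ack = original_id   # ack for the original attempt, delayed in flight
    retry_id = make_id()     # the original timed out, so a retry is sent
    # ... meanwhile the cluster elects a new leader; this one does not know ...
    leader.receive_request(retry_id, "retry")
    leader.receive_ack(late_ack)
    return "retry" in leader.confirmed

# Reusing one identifier for every attempt: the late ack matches the retry.
print(stale_read_possible(lambda: 7))

# A fresh identifier per attempt: the late ack matches nothing.
counter = itertools.count(1)
print(stale_read_possible(lambda: next(counter)))
```

With a reused identifier the late acknowledgement is accepted for the retry. With a unique identifier per attempt it is ignored, which is the idea behind the fix.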

Try it on your own bugs

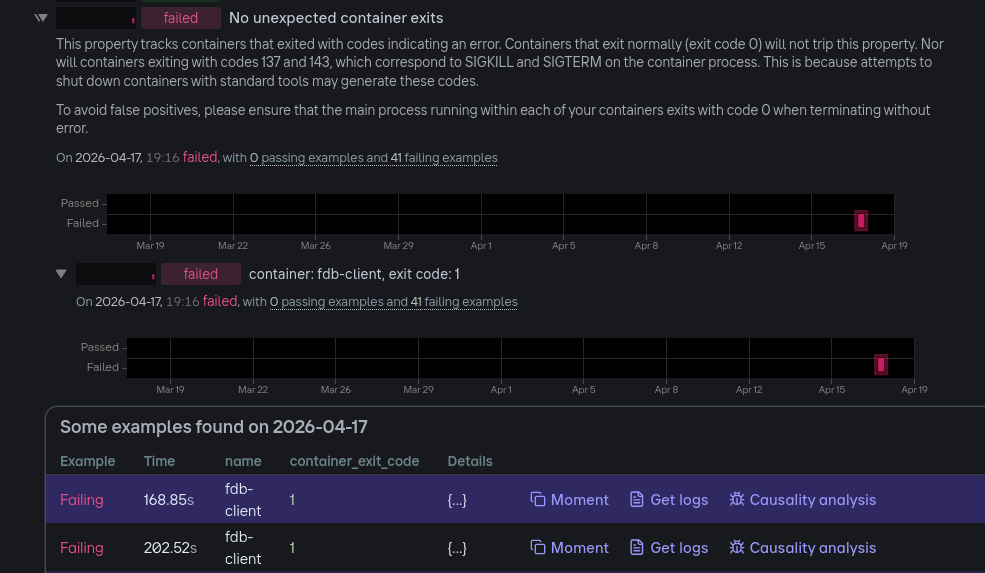

Next time you’re looking at a tricky bug in Antithesis, try out causality analysis. You can launch the analysis directly from a specific example in the Properties section of your triage report, by clicking Causality analysis:

If you’re using an AI agent, you can also give it the report URL and use Antithesis agent skills to help interpret the result for you.

Watch the graph update in real time as new results stream in. To view just the logs for a specific time window, simply zoom in to that part of the graph.

If you’re not yet using Antithesis, get in touch to see how we can help you find and understand your own bugs.