A declarative restoration

Our current testing practices are failing us. For all the time, money, and human labor we pour into building comprehensive test suites and writing detailed test cases, we still suffer from catastrophic bugs, embarrassing and costly production outages, and critical security vulnerabilities that always seem like they should have been caught earlier.

The sheer scale of our effort is not commensurate with the fragility of what’s in production. I started writing this in the middle of a major AWS outage where a DNS issue in a single service cascaded into failures across large parts of the internet. It was yet another reminder that a small number of subtle faults can still bring down enormous systems that are “well tested”.

It’s not every week that half the internet goes down — but we spend so much engineering time on testing and debugging that it’s incredible we don’t have something better. We’ve built an entire industry around processes that offer a thin veneer of quality at the cost of countless dev-years of toil. It is as if we’re building skyscrapers with teaspoons: they look impressive, but why are we still pouring concrete one spoonful at a time?

I read a lot of history in my spare time, so when I look at the situation today, it reminds me of another, equally stark choice about whether to modernize – Japan in 1853, on the brink of the Meiji Restoration. We’ll follow that story forward, from Black Ships in the 19th century to Kubernetes in the 21st, to see how declarative thinking quietly reshaped everything except testing, and why tracing that arc is the key to understanding what has to change next.

Black Ships on the horizon

Edo Bay, 1853. The horizon darkened as iron hulls cut through the morning mist. The “Black Ships” of Commodore Perry had arrived to “negotiate” an end to the isolationist policies of the Tokugawa Shogunate. The steam warships, just four of them, caused such alarm as to inspire this poem:

Breaking the halcyon slumber

of the Pacific;

The steam-powered ships,

a mere four boats are enough

to make us lose sleep at night

The message for the Shogunate was unmistakable: modernize, or be left behind.

What followed was a bold vision for a new nation, expressed in the Charter Oath of 1868. It laid out five high-level principles that remade an entire country. Within a single generation, Japan underwent one of the fastest industrializations in history, dismantling centuries of feudal hierarchy and transforming into a modern state – what we now call the Meiji Restoration.

Software testing today is in a similar position to Tokugawa Japan. The rest of the industry has changed around us, and if we want testing to keep up, we need to change as well.

The declarative revolution

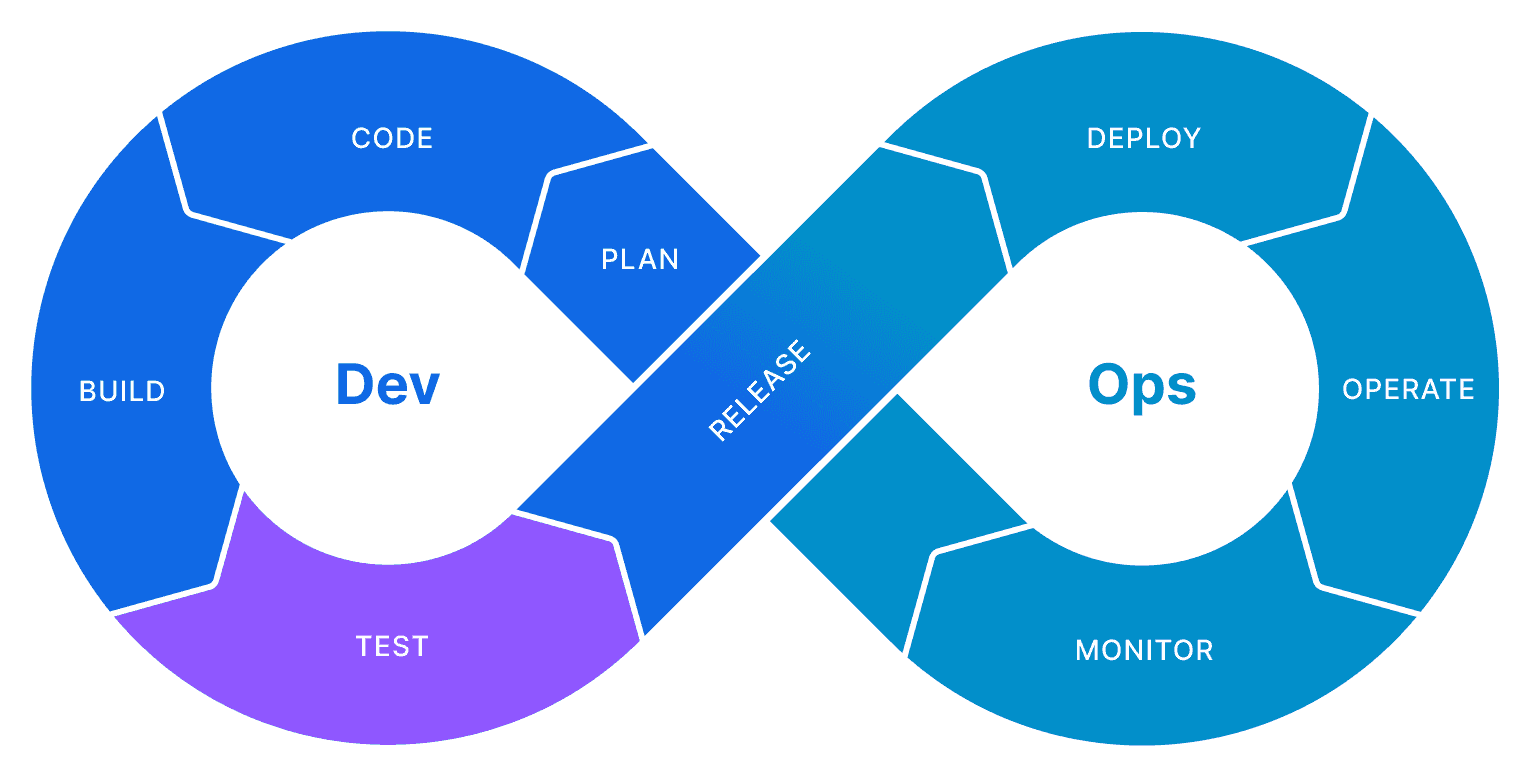

Over the last two decades, software development has gone through a massively successful revolution. It looked like a shift in tooling, but it was really a shift in mindset from imperative (“here’s how to do it”) practices to declarative (“here’s what I want; you figure out how”) ones.

Take infrastructure as an example. Before this shift, servers were like hand-tended gardens: sysadmins SSH’d in one by one, typing commands and running scripts. That approach inevitably produced “configuration drift”: subtle, undocumented differences between development, staging, and production that caused unpredictable failures. Code worked “on my machine” and mysteriously broke in production because no two environments were truly the same. On top of that, there was an invisible wall between feature teams and ops teams: developers threw changes over the wall, operations carried the pager, and velocity slowed to match the slowest part of the system.

The DevOps movement was born to address exactly these problems: brittle manual work, configuration drift, and the wall between dev and ops. It pushed teams to share responsibility for running software and to ship smaller changes more often, instead of rare, risky releases.

Making that vision real created hard requirements for our tools. Manual SSH sessions and one-off scripts had to give way to automation; builds, tests, and deployments needed to run end-to-end without a human in the loop. To keep environments from drifting apart, infrastructure, configuration, and even security policies had to be expressed as code, versioned and reviewed like application changes. Operations themselves had to become idempotent so that rerunning a deploy or an “apply” would converge the system on the right state instead of making things worse.

Once teams started expecting that level of speed and safety, the old imperative practices simply couldn’t keep up. We needed tools that could take a declaration of the desired infrastructure and application state and make reality line up with it, repeatably and reliably. That pressure is what kicked off the “Declarative Revolution” in how we build and run software.

From Puppet to Kubernetes

Early tools like Puppet1 and Ansible let us declare how a server should be configured (“Apache is installed and running,” “this package is present”). They were still focused on individual machines, but they moved us away from ad-hoc shell scripts.

The real inflection point came with tools like Terraform and Kubernetes.

With Terraform, you declare the desired end state of your infrastructure—servers, networks, databases, and their relationships—and let the tool compute a plan to get you there. You don’t write a script that says “create this VM, then that subnet, then that load balancer.” You describe the architecture, and Terraform figures out what needs to change. The same configuration can be applied over and over; if the actual state already matches, nothing happens.
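To make the plan-and-apply idea concrete, here is a minimal sketch in Python. It is not how Terraform is implemented: the `plan` and `apply` functions and the resource names are hypothetical, chosen only to show that a declared end state can be diffed against reality, and that applying it a second time changes nothing.

```python
from typing import Dict, List, Tuple

Resources = Dict[str, dict]


def plan(desired: Resources, actual: Resources) -> List[Tuple[str, str]]:
    """Compute the changes needed to move `actual` to `desired`."""
    changes = []
    for name, spec in desired.items():
        if name not in actual:
            changes.append(("create", name))
        elif actual[name] != spec:
            changes.append(("update", name))
    for name in actual:
        if name not in desired:
            changes.append(("delete", name))
    return changes


def apply(desired: Resources, actual: Resources) -> Resources:
    """Return the state that results from carrying out the plan."""
    new_state = dict(actual)
    for action, name in plan(desired, actual):
        if action == "delete":
            del new_state[name]
        else:  # create and update both converge on the declared spec
            new_state[name] = dict(desired[name])
    return new_state


# Hypothetical resources, for illustration only.
desired = {"vm-web": {"size": "small"}, "subnet-a": {"cidr": "10.0.0.0/24"}}
actual = {"vm-web": {"size": "large"}, "old-lb": {"port": 80}}

first_plan = plan(desired, actual)
state = apply(desired, actual)
second_plan = plan(desired, state)  # empty: reality already matches the declaration
```

Note that nothing in `desired` says what order to do things in. The ordering lives in the tool, which is exactly the point.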

With Kubernetes, we stopped scripting container lifecycles. Instead, we write manifests that say things like:

I want three replicas of this application, exposed behind a service, with this much CPU and memory.

The Kubernetes control plane continuously compares the current state to that declared desired state and reconciles the difference. If a pod dies, Kubernetes starts a new one. If a node fails, it reschedules workloads elsewhere. The system is constantly steering itself back toward the intent we declared.
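The reconciliation idea described above fits in a few lines. This is a toy model, not the Kubernetes API: the `Cluster` class, its `reconcile` method, and the pod names are assumptions made purely for illustration.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Set


@dataclass
class Cluster:
    desired_replicas: int                      # the declared intent
    pods: Set[str] = field(default_factory=set)  # the observed state
    _ids: count = field(default_factory=count, repr=False)

    def reconcile(self) -> None:
        """Start or stop pods until the running count matches the declaration."""
        while len(self.pods) < self.desired_replicas:
            self.pods.add(f"pod-{next(self._ids)}")
        while len(self.pods) > self.desired_replicas:
            self.pods.pop()


cluster = Cluster(desired_replicas=3)
cluster.reconcile()              # converge from nothing to three pods
cluster.pods.discard("pod-1")    # simulate a pod dying
cluster.reconcile()              # the loop steers back toward three
```

Nobody wrote a "restart the crashed pod" script. The replacement falls out of comparing what is running with what was declared.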

These declarative configurations live in version control. They’re readable, reviewable, and auditable. Anyone can diff “what changed” in the cluster or infrastructure without reverse‑engineering a bash script or an operator’s shell history.

Infrastructure stopped being a collection of fragile, manual incantations and became a declaration of intent that tools work to satisfy.

It’s hard to overstate how much these tools changed day‑to‑day software operations. Most modern teams, even if they don’t think of it this way, already live in a largely declarative world when it comes to infrastructure and application deployment.

Declarative systems, imperative tests

And yet, like Tokugawa Japan in 1853, portions of the software development world remain isolated, stuck in an imperative paradigm. Many engineers are still writing fragile, step-by-step imperative test scripts. Worse, an embarrassingly high proportion of testing work is still manual: people meticulously log in, click buttons, and watch stopwatches, checking outcomes in an error-prone and soul-crushing routine. This is the equivalent of sysadmins manually logging into a server to run a set of scripts. The process is brittle, slow, and does not scale.

I could go on about how this state of affairs grew out of a mindset that devalued testing and quality work. But regardless of why we considered this acceptable, testing is interconnected with the rest of software development. The cycle can only run as fast as its slowest process.2

Imperative testing practices simply cannot keep up with declarative development, and we see the result in on-call fatigue, developer burnout, and the sheer amount of dev-time that goes into trying to test code.3

And AI agents, in some sense the ultimate in declarative development tools, only make the situation more extreme. With the amount of code they generate, and how hard it is to review and test, it’s not just steamships on the horizon now: Commodore Perry has brought a nuclear-powered aircraft carrier.

A Meiji Restoration for testing?

While our peers are declaring the desired state of their systems and watching them self-heal, our old imperative testing practices are failing us in this new world. What we need is our own Meiji Restoration: a yet‑to‑exist practice of continuous correctness to match continuous integration and continuous deployment, built on a set of principles and practices that fundamentally shift us away from the imperative mindset of “how to test” to the declarative mindset of “what should be true.”

Such an approach treats testing not as a checklist of imperative actions but as declarations of truth about the system as a whole, applied across the entire SDLC. Instead of scripting “Click the login button, then type a password,” we declare “A valid user can log in and the dashboard is visible.” This shift from choreographing steps to declaring truths, and the tools and processes required to continuously assert these truths, is the next stage in our industry’s restoration.

Japan’s restoration began with five declarations in the Charter Oath. Likewise, our restoration should begin not with new tools, but with a set of declarations. Testing deserves its own Charter Oath:

- Declarative tests. We will write tests that declare what must be true about our systems, in a form that is both human‑readable and machine‑checkable. These declarative tests become a single source of truth for the guarantees we care about, shared and understood across every team working on the system.

- Properties over procedures. We will understand and express system behavior primarily as properties and invariants, not as collections of brittle step‑by‑step procedures. Those properties will be the anchor our tools use to check correctness continuously, even as humans and AI agents change code at ever-faster rates.

- Leverage the machine, empower the human. We will offload the task of exploring vast state spaces to machines, using automation, fuzzing, and simulation to generate and execute novel scenarios. Freed from the burden of hand‑coding and maintaining countless test scripts, humans will focus on work that requires real understanding, intuition, and creativity – crafting robust systems, expressing their properties, and debugging the rare complex failures that surface.

- Determinism at every level. We will insist that in our testing environments, any failing execution can be deterministically replayed, from local fuzzing sessions to full‑system simulations, turning rare heisenbugs into ordinary bugs we can understand and fix.

- Test harder than prod. We will move away from shallow indicators like test counts or code coverage as goals in themselves. Instead, we will build real confidence by testing our systems in environments that are even more hostile than production, so we feel safer in production than we do in our internal test environments.
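Here is a rough sketch of what “properties over procedures” and “determinism at every level” can look like together. It is a hand-rolled toy using only Python’s standard library, not the API of any real tool; `buggy_max`, `max_property`, and `find_counterexample` are hypothetical names, and the bug is planted on purpose. A real property-based testing library does this far more thoroughly.

```python
import random
from typing import Callable, List, Optional


def buggy_max(xs: List[int]) -> int:
    """Deliberately wrong: starting from 0 breaks on all-negative input."""
    best = 0
    for x in xs:
        if x > best:
            best = x
    return best


def max_property(xs: List[int]) -> bool:
    """What must be true: the result comes from the list and bounds every element."""
    m = buggy_max(xs)
    return m in xs and all(m >= x for x in xs)


def find_counterexample(
    prop: Callable[[List[int]], bool], seed: int, runs: int = 200
) -> Optional[List[int]]:
    """Generate inputs from a seeded RNG and return the first one that breaks prop."""
    rng = random.Random(seed)
    for _ in range(runs):
        xs = [rng.randint(-50, 50) for _ in range(rng.randint(1, 5))]
        if not prop(xs):
            return xs
    return None


first = find_counterexample(max_property, seed=2024)
replay = find_counterexample(max_property, seed=2024)  # same seed, same failure
print(first, replay)
```

The human wrote one sentence about what must be true; the machine went looking for inputs. Because every input derives from the seed, the failure is something you can rerun, not something you have to wait for.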

The fruits of restoration

Imagine a team where everyone knows the exact properties and invariants of the system, because they live in markdown files alongside the repo, written in clear, declarative language that’s both machine-checkable and human-readable. Those same files drive the exploratory tests that run continuously, so “what we expect” and “what we check” are literally the same thing.

Imagine you’re investigating a property failure that showed up in CI but not locally. Instead of trying to align the stars to reproduce it, your tooling has already found the minimal example that breaks the property. Your AI assistant, grounded in the properties and invariants you’ve made explicit, has narrowed the search space and surfaced a few likely causes. You reuse the seed from CI and trigger the exact same behavior locally, and start testing out solutions right away.
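Finding “the minimal example that breaks the property” is usually called shrinking. The sketch below is a deliberately naive version, assuming a list-shaped input and a greedy delete-one-element strategy; `buggy_unique`, `fails`, and `shrink` are hypothetical names, and real tools use much smarter strategies.

```python
from typing import Callable, List


def buggy_unique(xs: List[int]) -> List[int]:
    """Deliberately wrong: only removes duplicates that sit next to each other."""
    out: List[int] = []
    for x in xs:
        if not out or out[-1] != x:
            out.append(x)
    return out


def fails(xs: List[int]) -> bool:
    """The property is 'the output has no duplicates'; True means it is violated."""
    result = buggy_unique(xs)
    return len(set(result)) != len(result)


def shrink(xs: List[int], still_fails: Callable[[List[int]], bool]) -> List[int]:
    """Greedily drop one element at a time for as long as the failure persists."""
    current = list(xs)
    progress = True
    while progress:
        progress = False
        for i in range(len(current)):
            candidate = current[:i] + current[i + 1:]
            if still_fails(candidate):
                current = candidate
                progress = True
                break
    return current


noisy_failure = [5, 3, 9, 3, 7, 5]   # e.g. what a CI run happened to generate
minimal = shrink(noisy_failure, fails)
print(minimal)  # [3, 9, 3]
```

Three elements say far more about the bug than six: a value, something different, then the same value again.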

Imagine your fix requires a massive refactor of the cache layer. Safe in the knowledge that your existing properties and invariants capture what “correct” means for the system, you do the refactor and run your property‑based tests against the new code. Once those pass, you push to CI, where the same declarative tests are used to exercise the system under heavier load, more variation, and injected faults and slowdowns. When that passes, you merge and let the nightly runs go to work, using those same properties to explore far more scenarios and failure modes than your old imperative suites ever could. By morning, shipping to prod feels routine and safe, because anything production will throw at you is milder than what you’ve already seen.

In that world, tests stop being brittle gatekeepers and become the living contract of your system. Outages become rarer, refactors become less frightening, and you and your coworkers spend your time designing better systems instead of maintaining test scripts and firefighting on-call.

How much more could we build in that world? How much better would our systems be?

The call to action

The Declarative Revolution has already transformed how we build and run software, but the revolution is not complete. The last stronghold of the imperative world is testing.

The time for watching the Black Ships from the harbor is over. The path forward is clear, and powerful tools have already emerged: Hypothesis for property‑based testing, Schemathesis for exploratory API testing, Bombadil for exploratory UI testing, and Antithesis for deterministic simulation testing.

The fruits of this restoration are there for the taking: confidence in our systems, higher velocity, and systems that bend but don’t break.

Take a concrete step toward declarative testing and continuous correctness:

- If you’re an individual contributor: For the next change you make, pick one part of it and express a property about it. Then use property‑based testing to exercise that property, instead of adding yet another imperative test.

- If you’re a tech lead, architect, or manager: Ask your team to write down the key properties and invariants of the system: what must never happen, and what must always hold, so you’re all on the same page about what “correct” really means. Then look across your testing stack (unit, integration, end‑to‑end, and system‑level) and identify where those properties can be enforced declaratively. Finally, decide where declarative testing strategies with autonomous exploration can replace limited, brittle imperative suites.
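If “write down what must never happen and what must always hold” sounds abstract, here is one way it could look as a first pass. Everything in this sketch is an assumption for illustration: the toy ledger, the `INVARIANTS` table, and the `explore` function are hypothetical, and the random exploration is a stand-in for what a dedicated tool would do.

```python
import random
from typing import Callable, Dict, List, Tuple

Balances = Dict[str, int]

# The written-down invariants: readable by people, checkable by the machine.
INVARIANTS: Dict[str, Callable[[Balances, int], bool]] = {
    "no account is ever overdrawn":
        lambda balances, total: all(v >= 0 for v in balances.values()),
    "money is neither created nor destroyed":
        lambda balances, total: sum(balances.values()) == total,
}


def transfer(balances: Balances, src: str, dst: str, amount: int) -> None:
    """Move money between accounts, refusing transfers that would overdraw."""
    if amount <= balances[src]:
        balances[src] -= amount
        balances[dst] += amount


def explore(seed: int, steps: int = 1000) -> List[Tuple[int, str]]:
    """Apply random transfers and record every invariant violated along the way."""
    rng = random.Random(seed)
    balances = {"alice": 100, "bob": 50, "carol": 0}
    total = sum(balances.values())
    accounts = sorted(balances)
    violations = []
    for step in range(steps):
        src, dst = rng.sample(accounts, 2)
        transfer(balances, src, dst, rng.randint(0, 120))
        for name, holds in INVARIANTS.items():
            if not holds(balances, total):
                violations.append((step, name))
    return violations


violations = explore(seed=7)
print(violations)  # [] for this implementation of transfer
```

The useful part is the table at the top. Once the invariants have names, the team can argue about them, review them, and point any exploration strategy at them.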

We’ve already seen what happens when we apply declarative ideas to infrastructure and deployment. Now it’s testing’s turn. Stop accepting flaky, brittle, imperative test suites as the cost of doing business. Start insisting on tools and practices that move you toward declarative properties, machine‑driven exploration, and determinism.

And when you’re ready to see what true system‑level, property‑driven, deterministic testing looks like in practice, drop us a line to see if Antithesis is the right tool for you.