Antithesis report: Tigris Data

Introduction: Global consistency under chaos

Tigris is an S3-compatible object storage service with built-in global replication. Global replication is the default — objects written in one region are replicated to the nearest region as they are accessed, with no need for manual configuration. The system offers consistent performance globally.

Tigris tests their system extensively on a full multi-region cluster in CI. Their CI executes integration tests, S3 compatibility tests and race detection for every change. In addition, for third-party validation of system correctness and fault tolerance, they test all changes in Antithesis.

“Our tests pass” is one thing. Having an external adversarial system try to break their consistency guarantees under chaotic conditions is a stronger statement to make to users who trust them with their data.

Tigris’ system guarantees

Tigris deploys its full stack across multiple regions, with multiple FoundationDB clusters providing metadata storage and replication. Every object write is stored and queued for replication in a single FDB transaction. That transactional guarantee is what makes the architecture simple. It’s also what makes correctness hard: everything downstream (cache invalidation, data distribution, cross-region convergence) depends on the replication pipeline that follows.
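As a rough illustration of why storing a write and queuing its replication in one transaction matters, here is a minimal sketch. It uses Python's built-in `sqlite3` as a stand-in for FoundationDB; the table names, `open_store`, `put_object`, and the `fail_before_enqueue` switch are all hypothetical and are not Tigris' schema or code.

```python
import sqlite3


def open_store() -> sqlite3.Connection:
    """Create an in-memory store with an object table and a replication queue."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE objects (key TEXT PRIMARY KEY, etag TEXT NOT NULL)")
    conn.execute(
        "CREATE TABLE replication_queue ("
        "seq INTEGER PRIMARY KEY AUTOINCREMENT, key TEXT NOT NULL, etag TEXT NOT NULL)"
    )
    return conn


def put_object(conn, key, etag, fail_before_enqueue=False):
    """Store the object and enqueue its replication atomically."""
    # `with conn` commits if the block succeeds and rolls back if it raises,
    # so the two inserts either both land or neither does.
    with conn:
        conn.execute(
            "INSERT OR REPLACE INTO objects (key, etag) VALUES (?, ?)", (key, etag)
        )
        if fail_before_enqueue:
            raise RuntimeError("simulated crash between the two writes")
        conn.execute(
            "INSERT INTO replication_queue (key, etag) VALUES (?, ?)", (key, etag)
        )


def counts(conn):
    """Return (stored objects, queued replication events)."""
    objs = conn.execute("SELECT COUNT(*) FROM objects").fetchone()[0]
    queued = conn.execute("SELECT COUNT(*) FROM replication_queue").fetchone()[0]
    return objs, queued
```

In this sketch a crash between the two writes leaves neither behind, so there is never a stored object without a matching replication event. Everything after the commit, which is where the report's bugs live, is outside what the transaction protects.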

Tigris guarantees strong consistency within each region, regardless of bucket type. Read-after-write, read-after-delete, read-after-update, list-after-write. You PUT an object, you GET it back immediately. You DELETE it, you get a 404. Conditional operations like If-Match always evaluate against the latest state.

Tigris also guarantees eventual consistency across all regions, with sub-second replication lag. Replication has to be resilient to partial failures: a failure at any stage, a retry that duplicates work, or a timeout where the outcome is ambiguous can each cause regions to diverge. The system has to be correct when things fail, not just when everything works cleanly.

Metadata is pushed to every region automatically, but data is pulled on demand. When a user in one region requests an object uploaded in another, the local region fetches the data, caches it, and updates metadata so other regions know about the new copy. This process happens transparently.
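The push-metadata, pull-data behavior can be sketched with a toy in-memory model. This is only an illustration under simplifying assumptions (no failures, no concurrency, instant metadata delivery); the `Region` and `Cluster` classes and the region names used with them are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Region:
    name: str
    blocks: dict = field(default_factory=dict)     # key -> object data held locally
    locations: dict = field(default_factory=dict)  # key -> set of regions holding data


class Cluster:
    def __init__(self, names):
        self.regions = {n: Region(n) for n in names}

    def put(self, region, key, data):
        """Write data locally and push metadata to every region."""
        for r in self.regions.values():
            r.blocks.pop(key, None)        # drop copies of any older version
            r.locations[key] = {region}    # metadata is pushed everywhere
        self.regions[region].blocks[key] = data

    def get(self, region, key):
        """Serve locally if possible, otherwise pull the data on demand."""
        local = self.regions[region]
        if key not in local.locations:
            return None
        if key not in local.blocks:
            source = next(iter(local.locations[key]))
            local.blocks[key] = self.regions[source].blocks[key]  # pull and keep
            for r in self.regions.values():
                r.locations[key].add(region)  # tell other regions about the copy
        return local.blocks[key]
```

For example, after `put("fra", "k", b"hello")`, a `get("sjc", "k")` pulls the data into `sjc` and every region's metadata then lists both `fra` and `sjc` as holders. Each extra copy is also one more place a later delete has to reach.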

The caching layer is one of the features that enables Tigris to achieve sub-second replication performance. The cache always reads from the closest region, so every write or delete must invalidate cache entries across all regions. Tigris uses eager caching, which adds additional replication paths — data eagerly cached to multiple regions creates multiple invalidation paths when deleted. Region restrictions can also create additional replication paths. If any of these replications are late or dropped, users could see stale data, violating the consistency properties.

Overall, the most important properties Tigris tests for are strong consistency within a region and eventual consistency across regions.

Testing at Tigris

Tigris runs a full multi-region deployment in their CI pipeline, not a single-cluster mock of a multi-region setup. The test cluster mirrors production topology exactly: stateless API gateways, distributed cache nodes, workers, multiple FoundationDB clusters, and block stores across multiple regions.

Their CI process includes integration tests, S3 compatibility tests, and race detection.

- Integration tests exercise the core operations end-to-end — CRUD, multipart uploads, bucket management, ACLs, lifecycle policy management. These run through the actual S3 API layer, just as a real client would.

- S3 compatibility tests validate that the API behaves like S3. This matters because users bring existing tools and SDKs expecting specific behavior. If ListObjects pagination works differently, things break.

- Race detection is on for all test runs. The race detector catches data races in concurrent code paths.

The multi-region CI setup also lets Tigris test replication end-to-end. To test replication and read-after-write consistency, the system writes in one region and reads from another, writes to multiple regions concurrently and verifies all regions converge to the same state. The integration tests cover read-after-write consistency across the deployment. Tests run against multiple gateways hitting different regional servers, so cross-region behavior is exercised on every PR.

However, prior to working with Antithesis, Tigris’ CI pipeline did not include fault injection. Tests ran under clean conditions, with a stable network, all regions healthy, and a responsive database. They only verified correctness under best-case conditions, which cannot be assumed in production. Tests did not include scenarios like a region going down mid-replication, network partitions between clusters, or asymmetric load on databases in different regions. The failure combinations that cause real consistency bugs weren’t being explored.

Furthermore, the test scenarios in the CI pipeline were designed by Tigris’ engineers, so by definition, they cover only scenarios the team had thought of, a small subset of the chaotic conditions the system will encounter in production.

Antithesis

To address these gaps in their testing, Tigris uses Antithesis to systematically search for bugs that happen in unpredictable, unanticipated conditions, the so-called unknown unknowns. Antithesis is an autonomous testing platform that runs distributed systems in a simulation environment, with randomly generated inputs, under aggressive fault injection. Antithesis tries to explore the full state space of a complex distributed system to see whether undesirable states (bugs) are ever encountered.

The approach is essentially full-system property-based testing under fault injection. Users deploy a system to Antithesis, along with a client to exercise the system and all necessary dependencies. Antithesis exercises the system’s functionality using the provided client, while checking if the system ever violates the guarantees the user specifies.

Because the Antithesis simulation is fully deterministic, any property violations found can be reproduced and replayed on demand.

Tigris’ Antithesis setup

Tigris has been testing with Antithesis since July 2025. Their Antithesis deployment mirrors the multi-region setup they use in CI: stateless API gateways, distributed cache nodes, workers, multiple FoundationDB clusters, and block stores, all deployed across multiple regions in a topology identical to production.

Tigris began by adapting their standard CI integration test for use in Antithesis, and later wrote a dedicated Antithesis-optimized workload.

A standard integration test, however thorough, is still a scripted sequence of operations that asserts each one succeeds against a healthy system. Tigris’ tests exercise every core operation end-to-end through the actual S3 API, validate compatibility with existing client tools and SDKs, and run a race detector on every path. But the fundamental model is the same: each test either passes or fails, with no middle ground.

In Antithesis, tests that assert specific outcomes from specific regions break under fault injection, not because the system is wrong, but because a transient failure in the targeted region leads the test to interpret an ambiguous outcome as a failure. Error handling in typical integration tests is binary: operations succeed or fail. In a distributed system under faults, there’s a third state: uncertain. A write might have succeeded on the server while the response was dropped. The test sees an error and flags a failure, but the object is actually there. Tests that can’t reason about ambiguous outcomes raise false alarms under faults, which makes them useless for finding real bugs.

Because Antithesis tests systems under fault injection, tests have to be written with a fundamentally different approach. Instead of asserting that a given sequence of operations should pass or fail, the test needs to observe the software running in faulty conditions, then verify that the user’s specified invariants hold.

To test in Antithesis, Tigris wrote simple test scripts that mirror end-user behavior, each handling an individual operation or a sequence of operations: a read, a write, a read-then-write, and so on. These scripts are designed to behave the way a real client would under unpredictable conditions. Importantly, they tolerate faults: a 408 timeout, for example, is not interpreted as failure. The script understands that the outcome is ambiguous and handles both possibilities. Antithesis orchestrates Tigris’ collection of test scripts to run the software through hundreds of thousands of different scenarios. Each scenario is a random sequence of operations drawn from the collection of test scripts, happening under a random selection of faults generated by Antithesis.
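One way a fault-tolerant script can reason about ambiguous outcomes is to track, for each key, the set of values a later read is allowed to return. The sketch below is an assumption about how such bookkeeping might look, not Tigris' actual workload; `Outcome`, `Model`, and their methods are hypothetical names.

```python
from enum import Enum


class Outcome(Enum):
    OK = "ok"              # the server acknowledged the operation
    REJECTED = "rejected"  # the server definitively refused it
    UNKNOWN = "unknown"    # timeout or dropped response: it may or may not have applied


MISSING = None  # sentinel meaning "the key does not exist"


class Model:
    """Tracks which values a read may legally return for each key."""

    def __init__(self):
        self.allowed = {}  # key -> set of acceptable values

    def record_put(self, key, value, outcome):
        current = self.allowed.get(key, {MISSING})
        if outcome is Outcome.OK:
            self.allowed[key] = {value}            # only the new value is valid
        elif outcome is Outcome.UNKNOWN:
            self.allowed[key] = current | {value}  # old and new are both valid
        # REJECTED: the write did not happen, so nothing changes

    def record_delete(self, key, outcome):
        # A delete is modeled as a write of the MISSING sentinel.
        self.record_put(key, MISSING, outcome)

    def check_read(self, key, observed):
        """True if the observed value is consistent with the history so far."""
        return observed in self.allowed.get(key, {MISSING})
```

With this model, a PUT that times out does not fail the test by itself. The test fails only if a later read returns something that no acknowledged or possibly-applied operation could explain, such as a deleted object after an acknowledged DELETE.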

In addition to the standard faults Antithesis uses, Tigris has added regional faults, simulating entire regions slowing down or going offline, not just individual processes. This exercises the system’s disaster recovery pathway, allowing the team to observe behaviors like:

- does the system continue service from remaining regions?

- does it recover correctly when a region comes back?

- do all the regions converge after recovery?

Regional faults are where the most interesting bugs surface. Single-process failures are relatively easy to handle. Losing an entire region means the replication pipeline, cache layer, regional servers all go down simultaneously, and the system has to handle that combination without losing data or breaking consistency.
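The convergence question in the list above can be phrased as a bounded check: poll every region's view until they agree or a deadline passes. The helper below is a minimal sketch; `wait_for_convergence` and the snapshot callables (each returning a dict of key to ETag for one region) are hypothetical, and a real workload would build the snapshots from S3 listings.

```python
import time


def wait_for_convergence(snapshot_fns, timeout_s=5.0, poll_s=0.05,
                         clock=time.monotonic, sleep=time.sleep):
    """Poll per-region snapshots until all regions report the same state.

    snapshot_fns maps a region name to a zero-argument callable returning
    a dict of key -> etag. Returns (converged, last_snapshots).
    """
    deadline = clock() + timeout_s
    while True:
        snapshots = {name: fn() for name, fn in snapshot_fns.items()}
        # Regions agree when every snapshot has the same key/etag pairs.
        distinct = {frozenset(s.items()) for s in snapshots.values()}
        if len(distinct) <= 1:
            return True, snapshots
        if clock() >= deadline:
            return False, snapshots
        sleep(poll_s)
```

Returning the last snapshots alongside the verdict makes a failed check easier to debug, since the diverging region and keys can be reported directly.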

Today, Tigris runs two sets of tests every night — a version of their normal integration test, and their Antithesis-specific workload. The integration test runs for ~1 hour each night, and the Antithesis workload for ~8 hours. Each test runs in parallel on 48 cores, and Antithesis records the exact duration-in-simulation of each test run.

Between July 2025 and March 2026, Tigris ran:

| Metric | Value |
| --- | --- |
| Integration test runs | 330 |
| Antithesis workload runs | 261 |
| Integration test Virtual Hours | 18,509 |
| Antithesis workload Virtual Hours | 54,669 |

Antithesis also tracks the number of unique system states encountered in the simulation, and the number of executions explored. All figures for July 2025–March 2026.

| Metric | Value |
| --- | --- |
| Total states explored | 20,373,041 |
| Total executions explored | 211,006 |
| Average states per run | 27,457 |
| Average executions per run | 284 |

They review test results almost daily, and have integrated Antithesis with Slack for notifications, and Linear for issue tracking. When issues are found, they’re converted to Linear tickets for code owners to triage.

Results

Using Antithesis, Tigris found and prevented a number of issues that would be nearly impossible to detect with traditional testing.

The cache coherence problem

The bugs found to date share a common root cause: cache coherence, the window between a metadata operation succeeding in FoundationDB and the edge cache reflecting that change.

This is a well-known class of problems in distributed systems. Any architecture that places a cache in front of a strongly consistent store creates a contradiction: the store guarantees that every read after a write reflects the latest state, while the cache guarantees that most reads never touch the store. Under normal operation, the two stay in sync because invalidations succeed and events arrive in order. Under failure, they diverge. Distributed systems literature refers to this as the cache coherence problem, closely related to the broader question of how consistency models define when changes become visible across components. In database systems, the analogous challenge is maintaining external consistency when a read path can bypass the serialization point.

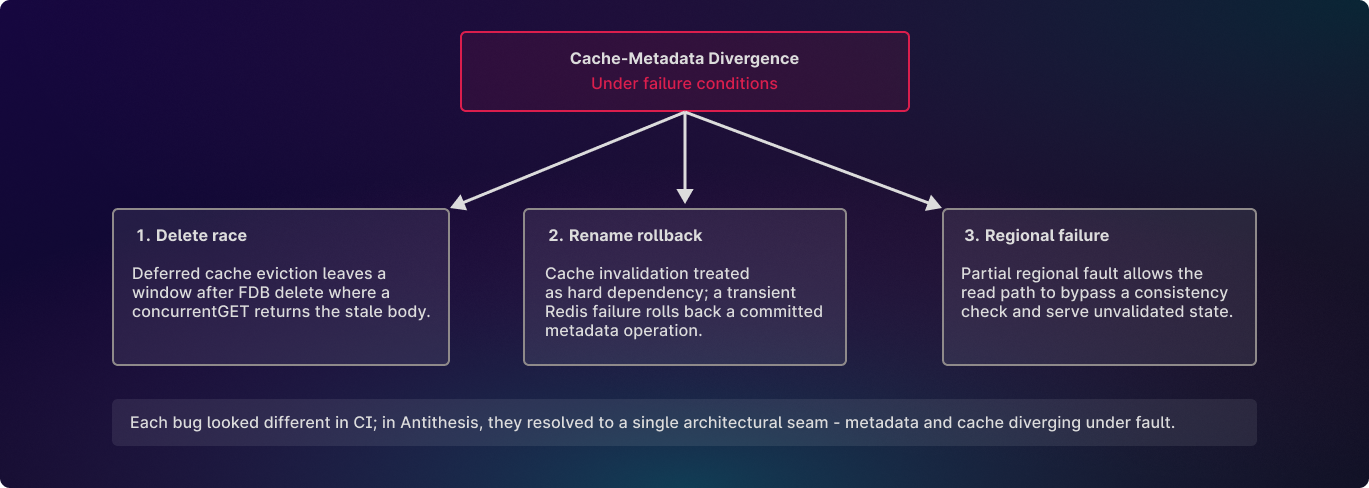

Antithesis surfaced three distinct manifestations of this divergence. All three were in common code paths constantly exercised by Tigris’ normal CI tests: deletes, renames, replication, cache reads. The code performed as expected in CI testing, but behaved in undesirable ways when code paths interacted under faults.

The delete-then-read race

A typical example of this class of bug is this delete-then-read race.

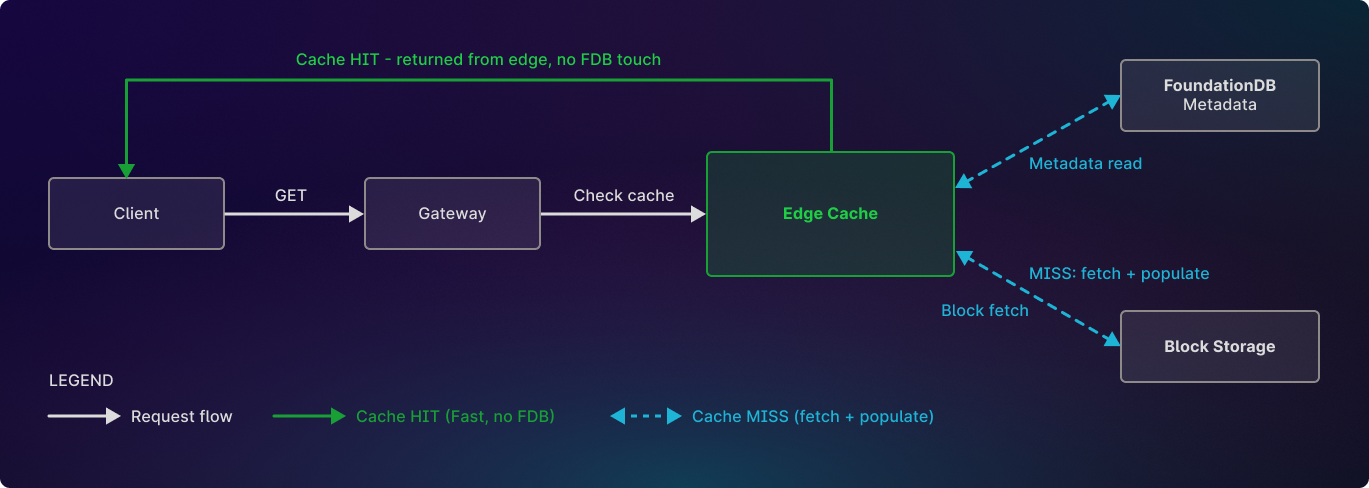

Tigris has a two-layer read architecture: an edge cache sitting in front of FoundationDB metadata and block storage. When a client issues a GET, the gateway checks the edge cache first. If the object is cached, it is returned directly, without a fetch from the metadata store. This is what makes reads fast.

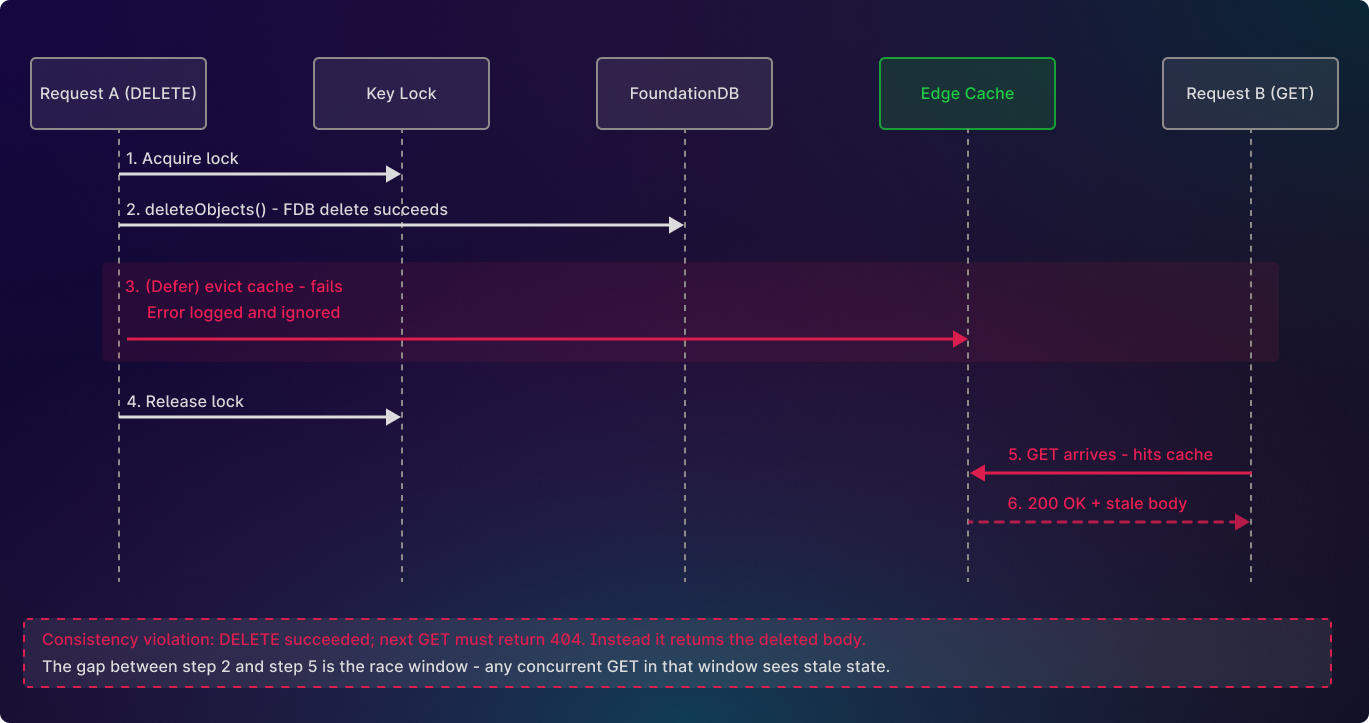

Initially, the code path for single-key DELETEs went through a function called deleteWithLock(). The function acquired a per-key cache lock, then called deleteObjects() which deleted the object from FDB. Cache eviction happened in a deferred cleanup step that ran after the FDB delete returned.

The sequence looked like this:

    deleteWithLock():
      1. acquire key lock
      2. defer release lock
      3. call deleteObjects():
         3a. defer on success: DeleteWithTomb(key) → writes tombstone, then evicts cache
         3b. commit FDB delete
         3c. [3a fires as deleteObjects returns]
      4. [deferred lock release fires as deleteWithLock returns]

If step 3c failed, the DELETE still returned success. The FDB delete in step 3b had already committed, so as far as the client knew, the object was gone. The failed eviction got logged and that was it. Step 4 released the lock, and the cache kept the old entry until async invalidation fired or its TTL expired.

After that, any GET for that key hit the cache and got back the deleted object with a 200. FDB said the object was gone, the cache said it was still there, and reads went through the cache. The DELETE succeeded but the next GET returned what was just deleted, and it kept returning it until the cache entry aged out on its TTL.

The eviction can fail for plenty of reasons. Tigris has two mechanisms that clear stale cache entries: an async invalidation task that fans out to every gateway after FDB commits, and a TTL on each cache entry that eventually ages it out. If the process crashes before the async task runs, or the task itself fails, or the cache call times out, the only mechanism left is TTL. FDB has moved on and the cache sits frozen in the past. The entry survives and every read during that window returns the deleted object.

The Antithesis delete-then-get workload caught this by issuing DELETE followed by an immediate GET on the same key from regional endpoints. Under fault injection the cache eviction sometimes failed, and the follow-up GET hit the edge cache and came back with the deleted object. This is a strong consistency violation: Tigris guarantees that a DELETE followed by a GET returns 404. The bug requires the cache eviction to fail silently while the FDB delete succeeds — a split outcome the code path had no way to detect or recover from.
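The split outcome is easy to reproduce in a toy model. The sketch below is not Tigris' code: `Gateway`, `EdgeCache`, and the `fail_evictions` fault switch are hypothetical, the key lock is omitted, and a plain dict stands in for FoundationDB. It only mirrors the ordering described above, where the store commit happens first and the eviction runs afterward with its error swallowed.

```python
class CacheUnavailable(Exception):
    """Raised when the cache cannot be reached (injected fault)."""


class EdgeCache:
    def __init__(self):
        self.entries = {}
        self.fail_evictions = False  # fault-injection switch for the sketch

    def evict(self, key):
        if self.fail_evictions:
            raise CacheUnavailable(key)
        self.entries.pop(key, None)


class Gateway:
    def __init__(self):
        self.store = {}           # stand-in for the metadata store
        self.cache = EdgeCache()

    def put(self, key, value):
        self.store[key] = value
        self.cache.entries[key] = value

    def get(self, key):
        if key in self.cache.entries:          # cache is checked first
            return 200, self.cache.entries[key]
        if key in self.store:
            self.cache.entries[key] = self.store[key]
            return 200, self.store[key]
        return 404, None

    def delete_deferred_eviction(self, key):
        self.store.pop(key, None)              # the delete commits first
        try:
            self.cache.evict(key)              # cleanup runs afterward
        except CacheUnavailable:
            pass                               # logged and ignored
        return 204                             # the client still sees success
```

With `fail_evictions` set, `delete_deferred_eviction("k")` returns 204 and the following `get("k")` returns 200 with the deleted value, which is the violation the workload detected. Without the fault, the same sequence returns 404.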

No engineer would write a test for this interleaving because no engineer would think of it. Antithesis found it on the first run using the Antithesis-specific workload.

This is an instance of what the literature calls a stale read: a read that returns a value that does not reflect a previously completed write. In linearizable systems, stale reads are a safety violation, not a performance problem. The Jepsen testing framework classifies stale reads as a violation of Strong Serializability.

Other manifestations

Antithesis testing surfaced the same cache-metadata divergence in three other scenarios. Each one is a different angle on the same architectural seam.

Rename treating cache invalidation as a hard dependency. A rename in Tigris is an atomic copy-then-delete at the metadata level. The rename commits as a single metadata transaction in FDB. After the commit, the code invalidated the edge cache entry for the old key, and it treated that call as required for the rename to succeed. If a transient cache timeout caused the invalidation to fail, RenameObject returned an error to the client, even though the rename had already committed. Cache invalidation is best-effort by nature. A cache that fails to clear will eventually age out on its TTL. The fix was to stop treating it as a blocker. The invalidation still runs in the same path and still tries to tombstone the old key, but its error is logged and ignored. The rename returns success once the FDB commit lands. If the cache call fails, the entry is cleared asynchronously.
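The shape of that fix can be sketched as follows. This is an illustration only: `rename_object`, `CacheTimeout`, and the `cache_invalidate` callable are hypothetical, and a dict mutation stands in for the FDB metadata transaction.

```python
import logging

log = logging.getLogger("rename-sketch")


class CacheTimeout(Exception):
    """Raised when the cache invalidation call times out (injected fault)."""


def rename_object(store, cache_invalidate, old_key, new_key):
    """Rename a key; cache invalidation is best-effort, not a hard dependency."""
    if old_key not in store:
        raise KeyError(old_key)
    # Stand-in for the single metadata transaction: once this line has run,
    # the rename has happened regardless of what the cache does next.
    store[new_key] = store.pop(old_key)
    try:
        cache_invalidate(old_key)
    except CacheTimeout as exc:
        # Log and continue; asynchronous cleanup or TTL clears the entry later.
        log.warning("invalidation of %r failed, deferring to async cleanup: %s",
                    old_key, exc)
    return True
```

The point of the design is that the return value reflects the committed state of the store. Reporting an error after the commit would tell the client the rename failed when it had in fact succeeded.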

Deleted objects resurfacing under regional failure. Under specific failure conditions involving partial regional faults, a deleted object could reappear on reads before the delete had fully settled across the region’s components. The fix tightened the read-path guarantees so the system validates before serving, even under degraded regional conditions.

None of these bugs surfaced in Tigris’ prior CI testing, which didn’t cover interactions between code paths under failure; that space is too large to cover by hand. The delete race requires a concurrent GET arriving in a millisecond-wide window. The rename bug requires a transient cache failure during an async invalidation step. The replication bug requires out-of-order event delivery combined with a retry. The regional failure bug requires partial failures across the replication path at infrastructure level. These are operational conditions, not test conditions. CI tests each of these code paths and each path passes. The failure combinations are combinatorial and the timing windows are narrow, but Antithesis’ exploration surfaces them, even if they never appear in production.

Resulting architectural changes

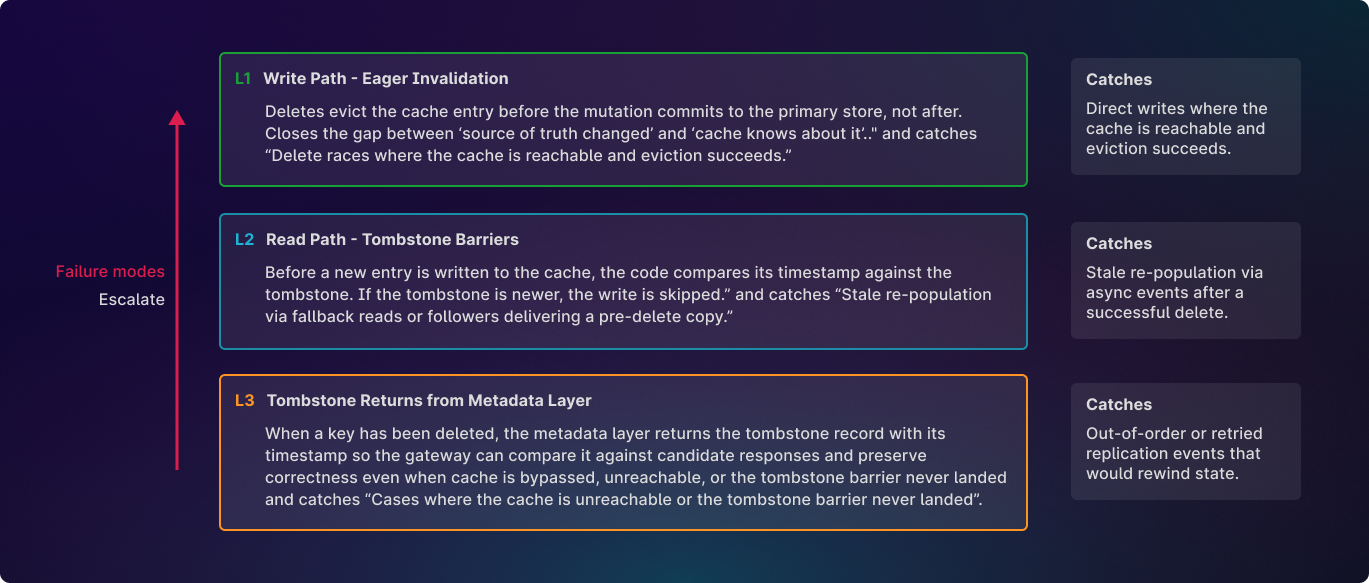

Antithesis helped Tigris uncover these potential failure modes before they could impact customers, or even be observed in production. Tigris fixed each bug individually and verified the fixes in Antithesis. Beyond the individual fixes, they changed their read architecture to address the root cause, hardening it with three independent defenses so that a single layer failing no longer allows a stale read to reach the client.

The most visible change was on the write path. Instead of deleting from FDB first and evicting the cache in a deferred cleanup, the code now evicts the cache eagerly while holding the key lock, then deletes from FDB.

Before (broken):

    delete(key):
      1. acquire key lock
      2. delete from FDB
      3. (deferred) evict cache entry + write tombstone
      4. release key lock
      ← If step 3 fails, cache still serves the deleted object until invalidation or expiration

After (fixed):

    delete(key):
      1. acquire key lock
      2. evict cache entry ← moved before FDB delete
      3. delete from FDB
      4. (deferred) evict again + write tombstone ← defense-in-depth
      5. release key lock
      ← cache is cleared before FDB commits; the deferred step writes the tombstone that blocks stale re-population later

The eager eviction clears the cache before FDB commits, so any subsequent read finds the cache empty and falls through to FDB. The cache call error is swallowed intentionally: if the cache is temporarily unreachable, the FDB delete still proceeds. The deferred step still runs afterward and writes a tombstone, which blocks stale re-population from later reads.
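A minimal sketch of the reordered delete, under the same toy assumptions as the rest of this report's illustrations: a dict stands in for FDB, the key lock is omitted, and `EdgeCache`, `delete_with_tomb`, and the `fail_calls` fault counter are hypothetical names rather than Tigris' implementation.

```python
import time


class CacheUnavailable(Exception):
    """Raised when the cache cannot be reached (injected fault)."""


class EdgeCache:
    def __init__(self):
        self.entries = {}      # key -> cached value
        self.tombstones = {}   # key -> delete timestamp
        self.fail_calls = 0    # number of upcoming cache calls that should fail

    def _maybe_fail(self):
        if self.fail_calls > 0:
            self.fail_calls -= 1
            raise CacheUnavailable()

    def evict(self, key):
        self._maybe_fail()
        self.entries.pop(key, None)

    def delete_with_tomb(self, key, ts):
        self._maybe_fail()
        self.tombstones[key] = ts      # write the tombstone, then evict
        self.entries.pop(key, None)


def delete(store, cache, key, now=time.time):
    """Evict eagerly, commit the delete, then evict again and write a tombstone."""
    try:
        cache.evict(key)                     # eager eviction before the commit
    except CacheUnavailable:
        pass                                 # swallowed: the delete still proceeds
    store.pop(key, None)                     # stand-in for the FDB delete
    try:
        cache.delete_with_tomb(key, now())   # deferred defense-in-depth step
    except CacheUnavailable:
        pass
```

In this sketch a single failed cache call no longer leaves a stale entry behind: if the eager eviction fails, the deferred step clears the entry, and if the deferred step fails, the eager eviction has already done so. Only both calls failing leaves the entry in place, which is the case the lower layers exist to catch.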

The result is that a single cache failure no longer translates into a stale read. The eager eviction handles the common case. The deferred tombstone write backs it up. And if both of those fail, two or more layers sit below the cache to catch what slipped through. The cache is still a performance optimization. It just doesn’t need to be perfectly correct for the system to preserve consistency.

Tigris has not encountered a cache coherence bug since they made this change. They have also published a deep-dive on their architecture changes.

Key takeaways

Making cache coherence work under failure requires coordination across three layers, not just one. Tigris was testing portions of each layer before they started using Antithesis, but their testing was not giving them complete coverage across all code paths at every layer.

Write path: eager invalidation. Deletes have to invalidate the cache entry before the mutation commits to the primary store, not after. Any gap between “the source of truth changed” and “the cache knows about it” opens a window where a concurrent read serves stale data. Deferring invalidation to a cleanup step created exactly that gap, which is where the delete race lay in wait.

Read path: tombstone barriers. Eager invalidation handles the case where the cache is reachable and the eviction succeeds. It does not handle the case where a stale version shows up later via a fallback read to another region and tries to re-populate the cache. The tombstone is a barrier on cache writes: before a new entry is written, the code compares its timestamp to the tombstone’s, and if the tombstone is newer the write is skipped. Even if a follower delivers a stale copy, the tombstone keeps it from poisoning the cache. This is the second line of defense.
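The barrier check itself is small. The sketch below assumes comparable numeric timestamps and uses hypothetical names (`BarrierCache`, `write_tombstone`, `populate`); it shows only the comparison described above, not how Tigris stores or expires tombstones.

```python
class BarrierCache:
    """Cache whose writes are gated by per-key delete tombstones."""

    def __init__(self):
        self.entries = {}      # key -> (value, modified_ts)
        self.tombstones = {}   # key -> delete_ts

    def write_tombstone(self, key, delete_ts):
        # Keep the newest delete timestamp seen for this key and drop the entry.
        self.tombstones[key] = max(delete_ts, self.tombstones.get(key, delete_ts))
        self.entries.pop(key, None)

    def populate(self, key, value, modified_ts):
        """Cache a value unless a newer tombstone says the key was deleted.

        Returns True if the entry was written, False if the barrier blocked it.
        """
        tomb = self.tombstones.get(key)
        if tomb is not None and tomb > modified_ts:
            return False       # stale copy: the delete happened after this version
        self.entries[key] = (value, modified_ts)
        return True
```

For instance, after `write_tombstone("k", 100)`, a late `populate("k", b"stale", 90)` is rejected, while `populate("k", b"new", 110)` is accepted because the object was recreated after the delete.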

Tombstone returns from metadata layer. The tombstone barrier in the cache is the first line of defense against serving deleted objects, but as part of this testing Tigris also wanted to harden the path that does not rely on the cache at all. In particular, when the gateway reconciles responses across regions and the local cluster no longer has the key, it must not select a stale copy from a remote cluster. In normal operation, this path is typically masked by the tombstone barrier in the cache, which causes the request to resolve to a 404 before reconciliation ever becomes relevant. But this testing intentionally focuses on the cases where that protection is absent, for example if tombstone barriers are not cached, or if the cache is bypassed entirely.

To make that path robust on its own, the fix extends the metadata response to return the tombstone record itself, including the delete timestamp, when a key has been removed. That gives the gateway enough information to compare the delete against remote responses, make the correct decision during reconciliation, and return the right result. In other words, cache remains the first barrier, but this ensures correctness even when tombstone barrier caching is disabled, skipped, or otherwise never comes into play.
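A rough sketch of reconciliation with tombstone records follows. The `MetaResponse` type, its fields, and the tie-breaking rule (a tombstone wins only when strictly newer than a live record) are assumptions made for illustration, not Tigris' wire format or policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MetaResponse:
    region: str
    timestamp: float             # last-modified time, or delete time for a tombstone
    is_tombstone: bool = False
    etag: Optional[str] = None   # set only for live objects


def reconcile(responses):
    """Pick the newest record across regions; None if no region knows the key."""
    if not responses:
        return None
    # On equal timestamps the live record sorts higher, so a tombstone wins
    # only when it is strictly newer (an assumption of this sketch).
    return max(responses, key=lambda r: (r.timestamp, not r.is_tombstone))


def status_for(responses):
    """Map the reconciled record to the HTTP status a GET would return."""
    winner = reconcile(responses)
    if winner is None or winner.is_tombstone:
        return 404
    return 200
```

If the local cluster reports nothing for a deleted key, the gateway sees only the remote's stale record and `status_for` yields 200. When the local cluster returns its tombstone with a delete time later than the remote copy, the same call yields 404.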

Tigris’ CI tested each of these layers individually and each layer passed. What their CI did not test was the interaction between layers under failure: a delete that succeeded in FDB while the cache eviction was deferred, while a concurrent read arrived in the gap, while a replication event from a follower tried to re-populate the cache with the stale version.

The practical takeaway for teams running a cache in front of a consistent store is that the layers have to be tested individually, and the gaps between them tested under fault.

Ongoing efforts

Tigris continues to test with both their own CI setup and Antithesis.

Their next reliability initiative, as of April 2026, is to begin testing individual subsystems in isolation. Tigris’ Antithesis testing so far has focused on the system as a whole, exercised through the S3 API, while Antithesis injects faults and checks system guarantees.

Replication Pipeline

Tigris’ first target for component testing is their replication pipeline. Replication in Tigris spans metadata distribution, block distribution, and cache invalidation, and these happen independently. A write can have its metadata arrive in a remote region before the block data does, or the reverse. Under normal conditions the gap is small. Under faults, the gap widens, and the interactions between these paths are where ordering and delivery assumptions get tested. Tigris is beginning to use Antithesis to explore those interactions specifically, whereas their current setup explores them only in the course of end-to-end API testing.

Async Processing Layer

Tigris has background processes responsible for replication delivery, cache invalidation, tombstone cleanup, and lifecycle management. When one of these processes fails mid-operation, the system needs to recover cleanly. Partially completed work can’t be left in an inconsistent state, and the recovery path can’t duplicate side effects. These are hard properties to verify with scripted tests because the failure timing matters as much as the failure itself. Antithesis is well suited to this, and Tigris plans to begin testing them in Antithesis.

Regional Failover

Tigris supports multiple location types, from single region to globally distributed, and each has different consistency and availability properties. Having added regional faults to their Antithesis instance, they are now beginning to test their system’s inter-region failover behaviors. Does the system continue serving from remaining regions? Does replication catch up correctly when a region recovers? Do all regions converge to the same state afterward?